Let’s build tech differently

There are new AI headlines every week, & my thoughts on it keep evolving & deepening. So I want to be extremely clear about where I stand on the state of technology.

I am not anti-AI.

I am not anti-tech.

I am not anti-algorithm.

I am against the reckless use of these tools, especially when they are used to exploit people.

This didn't start with AI. If anything, AI is exposing what was already happening. It has forced people to confront how technology can shape behavior.

This week, the headlines that caught my attention were about the trial over social media addiction. For those who don't know about it, there is a trial happening in Los Angeles against Meta & Google, alleging that they intentionally designed products to get young people addicted.

To build a product people will use, you have to think about human behavior & psychology. I can tell you that it's top of mind, especially at the larger tech companies. They run in-depth product design & planning cycles, & you'd better believe these topics come up.

Everyone is drawing a parallel between this & Big Tobacco in the '90s.

The thing about Silicon Valley is that things move extremely fast. Most teams are just focused on hitting their goals or metrics in any given quarter. Usually those metrics are tied to the usage & stickiness of their product. If a PM can say that 'X people' use their feature every single day, that makes them look really good. Why not optimize for that? I don't think people set out to get users addicted, or knew exactly what the long-term effects would be, but I do think we know now. We've seen so many studies, & we can see how it shapes most people's day-to-day lives.

Now, focusing on stickiness isn't inherently bad. Depending on the product, it can mean you built something genuinely useful that people have a healthy attachment to. With social media, we know that's not what is happening at these big tech companies. Most teams do not wake up intending harm. But when revenue, advertiser incentives, & shareholder pressure dominate decision-making, user wellbeing can become secondary. Users become just another metric. This is not a secret.

I've built a platform focused on music discovery through community, which makes it a social platform, & I've had to think about these things in depth. I know the effects social media has on mental health, so I've optimized for different things. Why? Because I don't want to exploit people.

I am someone who is susceptible to addiction. I know this about myself, & I've done a significant amount of work to build healthier relationships with things in my life. Because I have to stay conscious of this in my own life, I never want to perpetuate it in anyone else's. It's a bitch ass move to constantly prey on people's weaknesses. Every time I think about psychological behaviors… I think about myself. Would I prey on the weakest version of myself?

This philosophy has directly shaped Pearl Music. My co-founder, Kayla, & I had a lot of discussions while designing some foundational aspects of Pearl. One of them was how we think about engagement & interactions within the app: how do we help people unlearn some of the habits they've picked up from other social platforms?

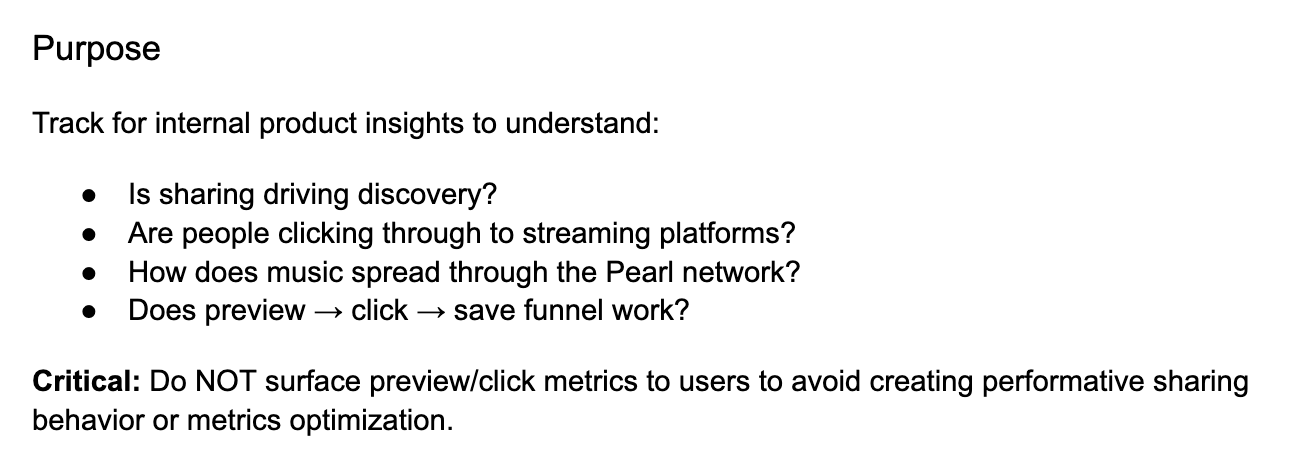

Screenshot directly from one of our design docs

We intentionally don’t let people ‘like’ posts in Pearl. You can comment on your friend’s activity & you can ‘save’ their song (a metric only the user who shared the song can see). We do allow people to heart comments, but that came from a different place. It’s a lightweight way to acknowledge someone in a conversation, not a tool for chasing validation.

Why did we design it this way? Pearl isn't about performing your taste for more likes. It's your own scrapbook & an expression of your music taste. Seeing who saved a song simply shows you the influence you had by posting it. It's cool to see the network effects of your taste.

Here's how I've been thinking about the ways AI & social media can be used. These are just a few examples:

Using with intention

Using models to solve complex, targeted problems on large datasets

Using chatbots as a thought partner so that you can think through complex problems & see different angles or holes in your thoughts

Using AI to automate tedious tasks that machines handle better & that bog you down in your day-to-day life (personal or professional)

Using social media to connect with people in your community. As an adult, you end up with friends & family all over the country, & friends with busy lives. Social media is still one of the best ways to express yourself & stay in touch with those people as you navigate daily life.

Using recklessly

Trying to replace human creativity with generative AI

Humanizing chatbots: we don't need to talk about them as if they were people. It is a tool.

Running algorithms without transparency & using them to manipulate what is considered 'trendy,' to censor people, or to control what they see

Turning social media platforms into content farms

Using LLMs in your product just to say you have 'AI' when simpler, less energy-intensive tools would do the same job

Exploiting human weakness for engagement is not innovation. It is a lack of accountability & responsibility. It's a bitch ass move. We can build differently & we should.